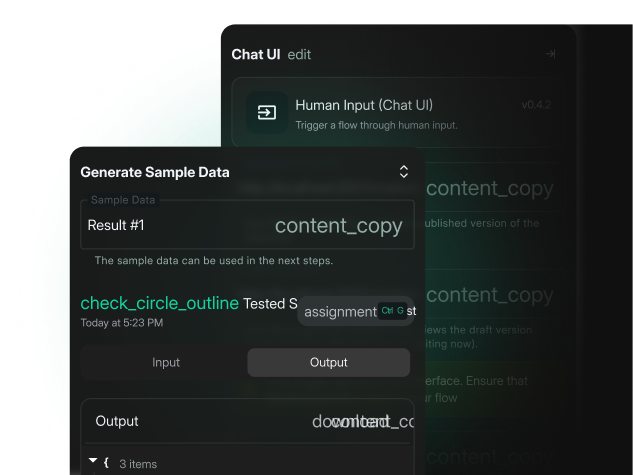

Advanced AI Observability

Full-stack observability from infrastructure to AI. Every signal in one place. Every cost tied to the workload that caused it.

Centralized Dashboard

Infrastructure to Application Correlation

Predictive Alerts and Incident Management

Alert Fatigue

190 alerts are ignored. One real outage is buried. We alert on service health, not raw spikes. Your phone stays silent unless the app breaks.

Signal Fragmentation

Metrics in one tool. Traces in another. You can't see the link. We correlate every signal. If latency spikes, you know if it’s the model or the GPU.

No Ownership Routing

An alert fires. Nobody moves. We route incidents to the exact team who can fix them. Platform, App, or AI.

No Path to Excellence

Stop firefighting. We build a specialized Center of Excellence to own observability across your organization.

"The OmniOps team demonstrated exceptional commitment… Their expertise and dedication to building a secure and reliable Google Cloud Platform environment were key to the project’s success "

Stop Guessing. Start Seeing.

Tell us what you're running. We'll tell you what it takes.

Request Observability AssessmentFrequently Asked Questions

First dashboards and alerts typically land within weeks of instrumentation. Full implementation depends on scope and access cycles.

Yes. We instrument on-prem, cloud, hybrid, and air-gapped workloads.

Yes. LLM response times, token costs, GPU utilization, and VectorDB performance. For on-prem and air-gapped deployments, coverage includes model request visibility, token categorization, cost monitoring, and RAG performance. If you run Bunyan or Rekaz, the AI observability layer integrates directly

Managed service is available by contract. Coverage depends on scope and delivery model.

One incident example. Current dashboard access. Ownership contacts. We scope from there.